Bridge the gap between data science and cloud infrastructure. Learn to build scalable ML pipelines, manage the model lifecycle, deploy real‑time inference endpoints, and monitor models in production, all using leading cloud AI services.

✔ 60% hands-on MLOps labs • 20+ real‑world projects • Official-style practice exams • Capstone project (end‑to‑end recommendation system on AWS/Azure) • 24/7 cloud lab access.

SageMaker, Azure ML, Vertex AI, Kubeflow, MLflow, Feature Stores, Model Registry, Serverless Inference (Lambda + API Gateway), Docker, Kubernetes, Terraform, CI/CD for ML.

Basic knowledge of Python, pandas/scikit-learn, and cloud fundamentals (any cloud). No prior MLOps experience required.

Build a fraud detection API, a real‑time sentiment analysis pipeline, a scalable image classification service, and a model monitoring dashboard.

Exam vouchers, mock tests, resume review, portfolio development, and interview preparation for ML Engineer / MLOps Engineer roles.

Tailored AI/ML upskilling for teams and enterprises

Choose from AWS SageMaker, Azure ML, or multi‑cloud MLOps tracks.

Hands-on practice in isolated cloud accounts with cost controls.

Monitor progress and skill gaps with detailed analytics.

Volume discounts for teams of 10+, plus pay-as-you-go options.

Dedicated cloud AI engineers to assist your learners anytime.

Single point of contact for seamless training delivery.

Get a custom quote for your organization's MLOps training.

From Model Training to Production‑Ready MLOps

Build, train, and deploy models using fully managed AI platforms. Covers AutoML, hyperparameter tuning, and model explainability.

Design reproducible ML pipelines using Kubeflow, TFX, or Azure ML Pipelines. Version data, code, and models with DVC and MLflow.

Deploy models as real‑time REST APIs (SageMaker endpoints, Azure ML online endpoints, Vertex AI online prediction). Optimize cost with serverless inference (Lambda + API Gateway).

Build and consume feature stores (SageMaker Feature Store, Feast) to serve consistent features for training and inference.

Detect data drift, concept drift, and model performance decay using monitoring tools such as CloudWatch, Azure Monitor, and the open-source Evidently AI library.

Provision ML environments using Terraform, AWS CDK, or Bicep. Implement CI/CD pipelines for model retraining and deployment.

Ideal Candidates for AI/ML Cloud Engineering Certification

Designed for professionals with foundational knowledge of Python and machine learning concepts. This program bridges the gap between model development and production deployment, preparing you to pass leading AI/ML cloud certifications (AWS Certified Machine Learning – Specialty, Microsoft Certified: Azure Data Scientist Associate, or Google Cloud Professional Machine Learning Engineer) and to excel in MLOps roles. Average salaries for AI/ML Cloud Engineers in India range from ₹10 Lakhs to ₹25+ Lakhs per year.

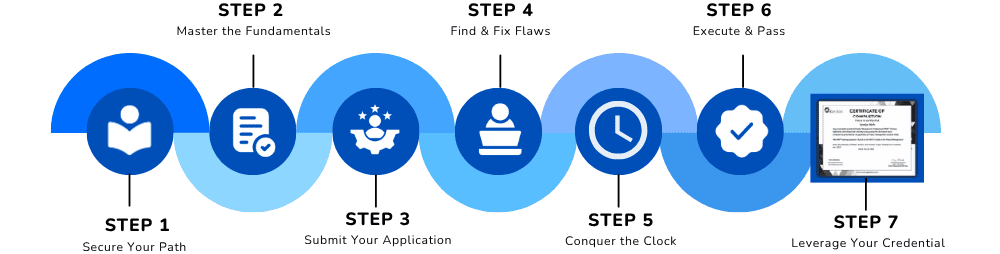

Your Step‑by‑Step Path to Production ML

Master cloud AI services, understand the MLOps lifecycle, and set up your first end‑to‑end ML pipeline on a cloud platform.

What You Need Before You Start

Objective: To certify your ability to design, implement, and maintain production‑ready machine learning systems on cloud platforms. Candidates should have:

Working knowledge of pandas, NumPy, scikit‑learn, and basic ML algorithms (linear regression, classification, clustering).

Familiarity with cloud concepts (IaaS, PaaS, SaaS) and ability to navigate a cloud console (AWS/Azure/GCP) is helpful but not mandatory.

No prior production ML experience required — we start from fundamentals and quickly progress to advanced MLOps patterns.

Comprehensive AI/ML cloud engineering modules aligned to industry certifications

Compare managed ML services, choose the right platform, and set up your cloud AI environment with IAM and cost controls.

Use cloud data services (S3, Azure Blob, BigQuery) and processing frameworks (Spark, Dataflow) for large‑scale feature engineering.

Leverage SageMaker Autopilot, Azure Automated ML, or Vertex AI AutoML to build high‑quality models with minimal code.

Log parameters, metrics, and models. Compare runs and select the best candidates for deployment.
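To give a feel for what run tracking involves, here is a minimal sketch in plain Python. It is not the API of MLflow or of any cloud service: the `Run` and `RunTracker` names are hypothetical, runs are kept in memory only, and the metric values are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One training run: its hyperparameters and the metrics it produced."""
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)


class RunTracker:
    """Hypothetical in-memory tracker; real tools persist runs to a backing store."""

    def __init__(self):
        self.runs = []

    def log_run(self, name, params, metrics):
        # Copy the dicts so later mutation by the caller does not alter the record.
        run = Run(name=name, params=dict(params), metrics=dict(metrics))
        self.runs.append(run)
        return run

    def best_run(self, metric, higher_is_better=True):
        # Only runs that actually logged the metric are comparable.
        candidates = [r for r in self.runs if metric in r.metrics]
        if not candidates:
            raise ValueError(f"no run logged metric {metric!r}")
        pick = max if higher_is_better else min
        return pick(candidates, key=lambda r: r.metrics[metric])


# Illustrative values only, not results from a real experiment.
tracker = RunTracker()
tracker.log_run("baseline", {"max_depth": 3}, {"auc": 0.81, "latency_ms": 12.0})
tracker.log_run("deeper", {"max_depth": 8}, {"auc": 0.86, "latency_ms": 20.0})
tracker.log_run("wide", {"max_depth": 5}, {"auc": 0.84, "latency_ms": 15.0})

best = tracker.best_run("auc")
fastest = tracker.best_run("latency_ms", higher_is_better=False)
print(best.name, fastest.name)
```

In practice the selection rule usually weighs several metrics at once (accuracy against latency or cost), which is why comparing runs side by side matters before promoting one to deployment.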

Deploy models as scalable REST APIs using SageMaker endpoints, Azure ML online endpoints, or Vertex AI online prediction.

Package models as lightweight containers or use Lambda layers for cost‑effective, low‑latency inference.

Build reusable, scalable ML pipelines with components for data ingestion, training, evaluation, and deployment.

Automate model retraining and deployment using GitHub Actions, GitLab CI, or Jenkins with model registry integration.

Implement SageMaker Feature Store, Feast, or the Azure ML managed feature store to serve consistent features online and offline.

Use DVC for dataset versioning and a model registry for reproducible training runs.
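Dataset versioning tools generally identify a version by a hash of the data's contents, so the same bytes always map to the same version and any change yields a new one. The sketch below illustrates that idea with the standard library only. It is not DVC's implementation or command set; the `dataset_version` helper and the sample CSV rows are assumptions made for the example.

```python
import hashlib
import tempfile
from pathlib import Path


def dataset_version(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel stops when read() returns empty bytes (end of file).
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


with tempfile.TemporaryDirectory() as tmp:
    data_file = Path(tmp) / "train.csv"

    # First snapshot of a made-up dataset.
    data_file.write_text("user_id,amount\n1,10.5\n2,99.0\n")
    v1 = dataset_version(data_file)
    v1_again = dataset_version(data_file)

    # Appending one row changes the content, so the version changes too.
    data_file.write_text("user_id,amount\n1,10.5\n2,99.0\n3,42.0\n")
    v2 = dataset_version(data_file)

print(v1 == v1_again, v1 != v2)
```

Recording such a digest next to each training run is one simple way to tie a registered model back to the exact data it was trained on.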

Monitor data drift, concept drift, and model performance using SageMaker Model Monitor, Azure Monitor, or Evidently AI.

Set up CloudWatch alarms, trigger retraining pipelines, and implement A/B testing for model traffic.
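As a rough illustration of a drift check that could feed a retraining trigger, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a live sample of one numeric feature. It is plain Python, not the method used by SageMaker Model Monitor, Azure Monitor, or Evidently AI. The `psi` function, the bin count, the 0.25 threshold (a common rule of thumb), and the synthetic data are all assumptions for the example.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: a uniform baseline and a copy shifted upward by 5.
baseline = [i / 100 for i in range(1000)]
shifted = [v + 5 for v in baseline]

DRIFT_THRESHOLD = 0.25  # assumed cutoff; tune per feature and use case

score_same = psi(baseline, list(baseline))
score_shifted = psi(baseline, shifted)
needs_retraining = score_shifted > DRIFT_THRESHOLD
print(needs_retraining)
```

In a production setup the boolean would typically be published as a metric or event that an alarm watches, rather than acted on inline. The sketch also assumes both samples are non-empty.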

Provision SageMaker notebooks, training jobs, endpoints, and storage using Terraform modules.

Declarative infrastructure for Azure ML workspaces and AWS SageMaker resources.

Use SageMaker distributed training, Azure ML parallel run, or Ray on Kubernetes for large models.

Provision GPU instances (AWS P4d, Azure NCas, GCP A2) and optimize training cost with spot instances.

Deploy portable ML pipelines using Kubeflow on EKS, AKS, GKE, or on‑prem.

Convert models across frameworks and deploy to any cloud inference platform.

Build a complete recommendation engine: feature store, automated retraining, deployment, monitoring, and A/B testing.

Practice with official‑style questions for the AWS Certified Machine Learning – Specialty, Azure Data Scientist Associate, and Google Cloud Professional Machine Learning Engineer exams.

Lifetime Access

Real MLOps Projects Included

Mentor Support

Practice Assignments

Certification Preparation

Join 12,000+ successful AI engineers who accelerated their careers with our production ML training. The demand for MLOps and cloud AI skills has grown 300% in the last two years.

✅ Limited seats available for the upcoming batch • EMI options available